The current paradigm of AI deployment treats large language models (LLMs) as the “brain” of the system: the reasoning engine that decides, plans, and executes. This is fundamentally flawed. In systems engineering, we avoid placing non-deterministic components in control positions, because a controller has to be predictable, testable, and robust.

The Fallacy of the Intelligent Agent

We often anthropomorphize LLMs. When we give a model access to tools or a database, we treat it as an autonomous agent. However, an LLM is a probabilistic engine, not a state machine. It does not possess an internal model of correctness; it possesses a model of linguistic probability. Treating it as a brain leads to “prompt engineering” as a substitute for system architecture.

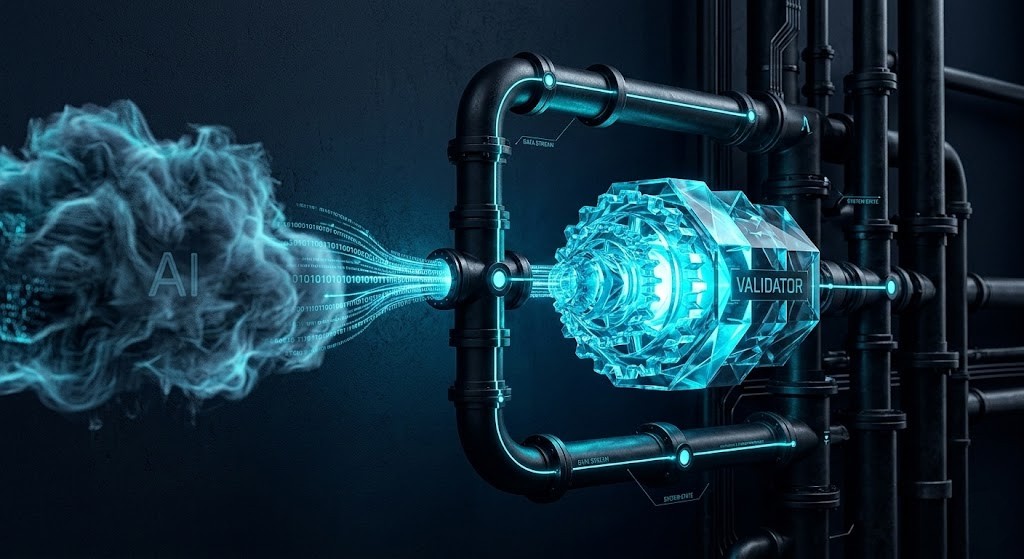

The Pipeline as a Worker

Instead of treating the AI as the brain, treat it as a worker: a stateless, non-deterministic microservice. In this architecture, the AI takes an input, processes it, and outputs a candidate result. Crucially, this result is just a suggestion; nothing executes until a deterministic check has accepted it.

```text
+----------+      +-----------+      +-----------+      +-----------+
|  Input   | ---> | AI Worker | ---> | Validator | ---> | Execution |
| (Prompt) |      | (Non-Det) |      |   (Det)   |      | (System)  |
+----------+      +-----------+      +-----------+      +-----------+
                                           │
                                           └---> [Reject/Retry]
```
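
One way to make the “just a suggestion” boundary concrete is to encode it in the types. The following is a minimal Python sketch, not a definitive implementation: `Candidate`, `Worker`, `fake_worker`, and `propose` are hypothetical names introduced here, and `fake_worker` returns canned text in place of a real model call.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Candidate:
    """A suggestion produced by the worker. Holding one executes nothing."""
    raw_text: str


# A worker is any stateless callable from a prompt to a candidate.
Worker = Callable[[str], Candidate]


def fake_worker(prompt: str) -> Candidate:
    # Hypothetical stand-in for a model call; it always returns canned text.
    return Candidate(raw_text='{"action": "refund", "amount": 25}')


def propose(worker: Worker, prompt: str) -> Candidate:
    # The worker receives only the prompt. It gets no handle to the database
    # or the execution layer, so its output cannot act on the system directly.
    return worker(prompt)


candidate = propose(fake_worker, "Customer asks for a refund on an order")
print(candidate.raw_text)
```

The design choice worth noting is that the worker's return type carries data only. Whatever the model writes, the rest of the system sees it as text to be checked, never as a command.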

Validation as a Deterministic Gate

The “Worker” model requires a strict “Governance Layer.” If the AI worker suggests an action, that action must pass through a validator before anything executes. This validator is not AI. It is a deterministic script, a schema checker, or a state machine that answers one question: “Does this action violate system constraints?”
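
As a rough illustration of such a gate, the sketch below checks a candidate against a schema and two constraints using only the Python standard library. The allowlist, the refund ceiling, and the names `ALLOWED_ACTIONS`, `MAX_REFUND`, `Verdict`, and `validate` are assumptions made for the example; a real system would substitute its own constraints.

```python
import json
from dataclasses import dataclass
from typing import Optional

# Hypothetical system constraints, chosen only for illustration.
ALLOWED_ACTIONS = {"refund", "send_email"}
MAX_REFUND = 100


@dataclass(frozen=True)
class Verdict:
    ok: bool
    reason: str
    action: Optional[dict] = None


def validate(raw_text: str) -> Verdict:
    """Deterministic gate: the same input always yields the same verdict."""
    try:
        action = json.loads(raw_text)
    except json.JSONDecodeError:
        return Verdict(False, "not valid JSON")
    if not isinstance(action, dict):
        return Verdict(False, "expected a JSON object")

    name = action.get("action")
    # Check the type first: an unhashable value would break the set lookup.
    if not isinstance(name, str) or name not in ALLOWED_ACTIONS:
        return Verdict(False, "action not in allowlist")

    if name == "refund":
        amount = action.get("amount")
        # bool is a subclass of int in Python, so exclude it explicitly.
        if isinstance(amount, bool) or not isinstance(amount, (int, float)):
            return Verdict(False, "amount must be a number")
        if not 0 < amount <= MAX_REFUND:
            return Verdict(False, "amount outside allowed range")

    return Verdict(True, "ok", action)
```

Every rejection carries a reason string, which gives the caller something to log or to feed back into a retry. The gate contains no model call, so it can be unit-tested like any other function.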

The Cost of Non-Determinism

When we treat the AI as the brain, we inherit its non-determinism. We see failure modes like hallucination, prompt injection, and inconsistent answers to identical inputs. By relegating the AI to a “Worker” status, we contain the non-determinism. If the AI worker fails, the system doesn’t crash; the validator rejects the output, and we retry or fail gracefully.
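
The reject-and-retry path can be sketched as a bounded loop. This is one possible shape under stated assumptions: `run_with_retries` and its parameters are hypothetical, the worker and the gate are passed in as plain callables, and returning `None` stands in for whatever graceful failure the system defines.

```python
from typing import Callable, Optional


def run_with_retries(
    worker: Callable[[str], str],
    is_valid: Callable[[str], bool],
    prompt: str,
    max_attempts: int = 3,
) -> Optional[str]:
    """Return the first candidate that passes the gate, or None."""
    for _ in range(max_attempts):
        try:
            candidate = worker(prompt)
        except Exception:
            # A worker crash is handled like a rejected output: try again.
            continue
        if is_valid(candidate):
            return candidate
    # Attempts exhausted. The caller decides how to fail gracefully,
    # for example by returning an error or escalating to a human.
    return None


# Illustrative run: a stand-in worker that is wrong twice, then right.
outputs = iter(["garbage", "still garbage", "42"])
result = run_with_retries(lambda prompt: next(outputs), str.isdigit, "How many?")
print(result)
```

The retry budget is fixed up front, so the worst-case cost of a misbehaving worker is known before the system runs.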

Conclusion

Building reliable AI systems requires us to strip the AI of its role as the decision-maker. It is a tool. We must build the control plane around it, not inside it.